Exposing Spring AI Agents via the A2A Protocol: What Interoperability Actually Buys You

Spring AI's server-side A2A integration is stable enough to put in production, but the protocol is most useful at organizational boundaries, not as an internal RPC replacement. This post walks through what actually changes in a Spring AI codebase, where the sharp edges still are, and a practical decision framework for A2A vs MCP vs plain REST.

The Agent2Agent protocol moved into the Linux Foundation in 2025, and spring-ai-a2a picked up first-class autoconfiguration at the start of 2026. If you run a JVM stack, you now have a standard way to publish an agent so that something you do not own — a different team, a different runtime, a different company — can discover it and talk to it without a bespoke integration.

That is the pitch. The reality is narrower than the pitch. A2A is not a better gRPC and it is not a replacement for the agent tooling you already have inside your own service mesh. It is a boundary protocol, and treating it as one is the difference between a clean rollout and six months of leaky abstractions.

This post is about what actually changes in a Spring AI codebase when you expose an agent over A2A, where the protocol is still sharp, and how to decide when it is the right tool. I will keep the example minimal and Kotlin-first, because that is where most of my Spring AI work lives.

What A2A is really doing

Strip away the marketing and A2A is three things. First, a discovery contract: every A2A server publishes an AgentCard at /.well-known/agent-card.json describing its identity, its skills, and its capabilities. Second, a message exchange protocol over HTTP+JSON for sending structured prompts, receiving structured responses, and streaming partial results. Third, a deliberate commitment to opacity: the caller does not know whether the other side is a Spring AI agent, a Python LangChain agent, or a human with a very fast typing speed. Only the AgentCard and the wire contract matter.
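To make the discovery contract concrete, here is a minimal Kotlin sketch of what a caller can decide from a card alone, before sending a single message. The types `AgentCardInfo` and `SkillInfo` and their fields are simplified assumptions for illustration; they are not the spec's full schema and not Spring AI's classes.

```kotlin
// Simplified, hypothetical view of an AgentCard; the real schema carries more fields.
data class SkillInfo(val id: String, val description: String)

data class AgentCardInfo(
    val name: String,
    val description: String,
    val version: String,
    val streaming: Boolean,
    val skills: List<SkillInfo>,
)

// Looks up a skill by id, or returns null if the card does not advertise it.
fun AgentCardInfo.findSkill(id: String): SkillInfo? = skills.firstOrNull { it.id == id }

// A caller-side pre-flight check based only on what the card declares.
fun AgentCardInfo.canServe(skillId: String, needsStreaming: Boolean): Boolean =
    findSkill(skillId) != null && (!needsStreaming || streaming)
```

Note that nothing here inspects the runtime behind the card, which is the opacity point: the caller reasons about declarations only.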

The AgentCard is where most of the value sits. A skill is the unit of capability, and a capability declaration tells callers what inputs a skill accepts and what it returns. In Spring AI's implementation, this is emitted automatically from your ChatClient-backed agent, which is pleasant until you realize the AgentCard is not a contract test. It tells the caller what shape to send; it does not promise the agent will behave the same way next week. You still need your own compatibility discipline on top.
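One way to add that discipline is a small compatibility check that runs in CI against the previously published schema. The sketch below is an assumption about how you might model it: `SkillSchema` is a hypothetical, flattened view of a skill's input schema, and the check covers input shape only, not behavior.

```kotlin
// Hypothetical, simplified view of a skill's input schema:
// field name -> JSON type, plus the set of fields the caller must send.
data class SkillSchema(val properties: Map<String, String>, val required: Set<String>)

// Returns the reasons why `next` would break callers written against `previous`.
fun breakingChanges(previous: SkillSchema, next: SkillSchema): List<String> {
    val problems = mutableListOf<String>()
    // A field that callers may already be sending has disappeared.
    for (name in previous.properties.keys - next.properties.keys) {
        problems += "removed field '$name'"
    }
    // A field kept its name but changed its type.
    for ((name, type) in previous.properties) {
        val newType = next.properties[name]
        if (newType != null && newType != type) {
            problems += "field '$name' changed type from $type to $newType"
        }
    }
    // Existing callers do not send a field that has just become mandatory.
    for (name in next.required - previous.required) {
        problems += "field '$name' is newly required"
    }
    return problems
}
```

An empty result only means the input shape is backward compatible. Whether the agent still behaves the same way needs its own tests.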

A minimal Spring AI A2A server in Kotlin

Here is the smallest realistic example: a single-file Spring Boot app that exposes a "summarize-release-notes" agent over A2A. It compiles and runs as-is on Spring Boot 3.4 and Spring AI 1.0.x, with the spring-ai-starter-a2a-server starter on the classpath.

    package com.example.releaseagent

    import org.springframework.ai.a2a.server.A2aAgent
    import org.springframework.ai.a2a.server.A2aSkill
    import org.springframework.ai.chat.client.ChatClient
    import org.springframework.boot.autoconfigure.SpringBootApplication
    import org.springframework.boot.runApplication
    import org.springframework.context.annotation.Bean

    @SpringBootApplication
    class ReleaseAgentApplication {

        // Publishes one agent with a single "summarize" skill.
        @Bean
        fun releaseAgent(chatClient: ChatClient.Builder): A2aAgent =
            A2aAgent.builder()
                .name("release-notes-summarizer")
                .description("Summarizes raw release notes into a 3-bullet executive digest.")
                .version("1.0.0")
                .skill(
                    A2aSkill.builder()
                        .id("summarize")
                        .description("Takes markdown release notes; returns a 3-bullet digest.")
                        // JSON Schema for the input: an object with one required string field, "notes".
                        .inputSchema(
                            mapOf(
                                "type" to "object",
                                "properties" to mapOf("notes" to mapOf("type" to "string")),
                                "required" to listOf("notes")
                            )
                        )
                        // Receives the decoded input map and returns the model's text.
                        .handler { input ->
                            val notes = input["notes"] as String
                            chatClient.build()
                                .prompt()
                                .system("Return exactly 3 bullets. No preamble.")
                                .user(notes)
                                .call()
                                .content()
                        }
                        .build()
                )
                .build()
    }

    fun main(args: Array<String>) {
        runApplication<ReleaseAgentApplication>(*args)
    }

Run it with ./gradlew bootRun. The interesting line is the handler lambda: it is a plain Kotlin function that receives the decoded input map and returns a string. Everything around it (the JSON envelope, the streaming hooks, the AgentCard generation) is handled by autoconfiguration. Hit http://localhost:8080/.well-known/agent-card.json and you will see the card Spring AI built from the skill definition.
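If you would rather check the card from code than from a browser, a sketch along these lines should do. It uses only the JDK's built-in HTTP client; the function names `agentCardRequest` and `fetchAgentCard` are mine, and the base URL assumes the default local port used above.

```kotlin
import java.net.URI
import java.net.http.HttpClient
import java.net.http.HttpRequest
import java.net.http.HttpResponse

// Builds a GET request for the well-known card path under the given base URL.
fun agentCardRequest(baseUrl: String): HttpRequest =
    HttpRequest.newBuilder(URI.create(baseUrl.trimEnd('/') + "/.well-known/agent-card.json"))
        .header("Accept", "application/json")
        .GET()
        .build()

// Fetches the raw card JSON; fails loudly if the server does not answer with 200.
fun fetchAgentCard(baseUrl: String, client: HttpClient = HttpClient.newHttpClient()): String {
    val response = client.send(agentCardRequest(baseUrl), HttpResponse.BodyHandlers.ofString())
    check(response.statusCode() == 200) { "Unexpected status ${response.statusCode()}" }
    return response.body()
}
```

With the app running, `println(fetchAgentCard("http://localhost:8080"))` prints the generated card. Storing that output as a snapshot is a cheap way to notice when the card changes between releases.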

The footgun in this snippet is subtle: version("1.0.0") on the agent is advisory. Nothing stops you from changing the shape of the summarize skill's output between releases while leaving the version string untouched. That is your problem, not A2A's.
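Since the version string will not enforce anything, the output shape has to be pinned by a test you own. Here is a minimal sketch of such a check for the "exactly 3 bullets" promise. It assumes the model emits markdown bullets starting with "- " or "* ", which is my assumption and not something the protocol guarantees.

```kotlin
// Counts lines that look like markdown bullets ("- " or "* "), ignoring indentation.
fun bulletCount(text: String): Int =
    text.lines().count { line ->
        val trimmed = line.trimStart()
        trimmed.startsWith("- ") || trimmed.startsWith("* ")
    }

// True only if every non-blank line is a bullet and there are exactly three of them,
// so a preamble or trailing prose fails the check.
fun isThreeBulletDigest(text: String): Boolean {
    val nonBlankLines = text.lines().count { it.isNotBlank() }
    return nonBlankLines == 3 && bulletCount(text) == 3
}
```

Run it against recorded or live responses in CI. When the check starts failing, that is the signal to bump the skill version rather than silently ship a new shape.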

The asymmetry nobody mentions

Spring AI's A2A server support is production-shaped. The client side — where you consume some other team's A2A agent from inside your Spring app — is still catching up. Discovery works, invocation works, but the ergonomics around streaming, partial failures, and mid-call tool invocation are evolving month to month.

What this means in practice: if you are rolling out A2A, expose first, consume later. Put your own agents behind A2A so external systems can reach them with a stable contract, but do not rewrite your internal agent-calls-agent code paths to go through A2A unless the other side is outside your trust boundary. Internally, keep using direct injection of ChatClient beans or whatever transport you were already using. You will revisit client adoption in six months when the APIs settle.

Auth is the thing to watch

The A2A spec defines how to declare auth requirements in the AgentCard but is deliberately thin about enforcing a specific trust model. You can say "this skill requires a bearer token"; the spec does not say anything about who issues that token, how it is rotated, or how multi-tenant isolation is enforced inside the agent.

For an internal agent this is fine — slot in whatever you already use, OAuth2 resource server, mTLS, an API gateway. For a cross-organization agent it is not fine. Two companies exchanging agents will quickly discover they need a shared identity provider, an agreed audience claim, a rate-limiting story, and a plan for what happens when the remote agent starts hallucinating and burning tokens on your account. A2A hands you none of that. Budget real design time for it.
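To give a sense of what "an agreed audience claim" means at the code level, here is a sketch of the checks that run after a token's signature has already been verified by whatever library or gateway you use. `TokenClaims` and `rejectReason` are hypothetical names, and the claim set is deliberately minimal.

```kotlin
import java.time.Instant

// Hypothetical view of claims from a token whose signature was ALREADY verified elsewhere.
data class TokenClaims(
    val issuer: String,
    val audience: Set<String>,
    val subject: String,
    val expiresAt: Instant,
)

// Returns null when the claims are acceptable, otherwise the reason for rejection.
fun rejectReason(
    claims: TokenClaims,
    trustedIssuers: Set<String>,
    expectedAudience: String,
    now: Instant,
): String? = when {
    claims.issuer !in trustedIssuers -> "untrusted issuer"
    expectedAudience !in claims.audience -> "wrong audience"
    !now.isBefore(claims.expiresAt) -> "token expired"
    else -> null
}
```

The two parameters that need cross-company agreement are `trustedIssuers` and `expectedAudience`. The `subject` is what you would key per-caller quotas and tenant isolation on.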

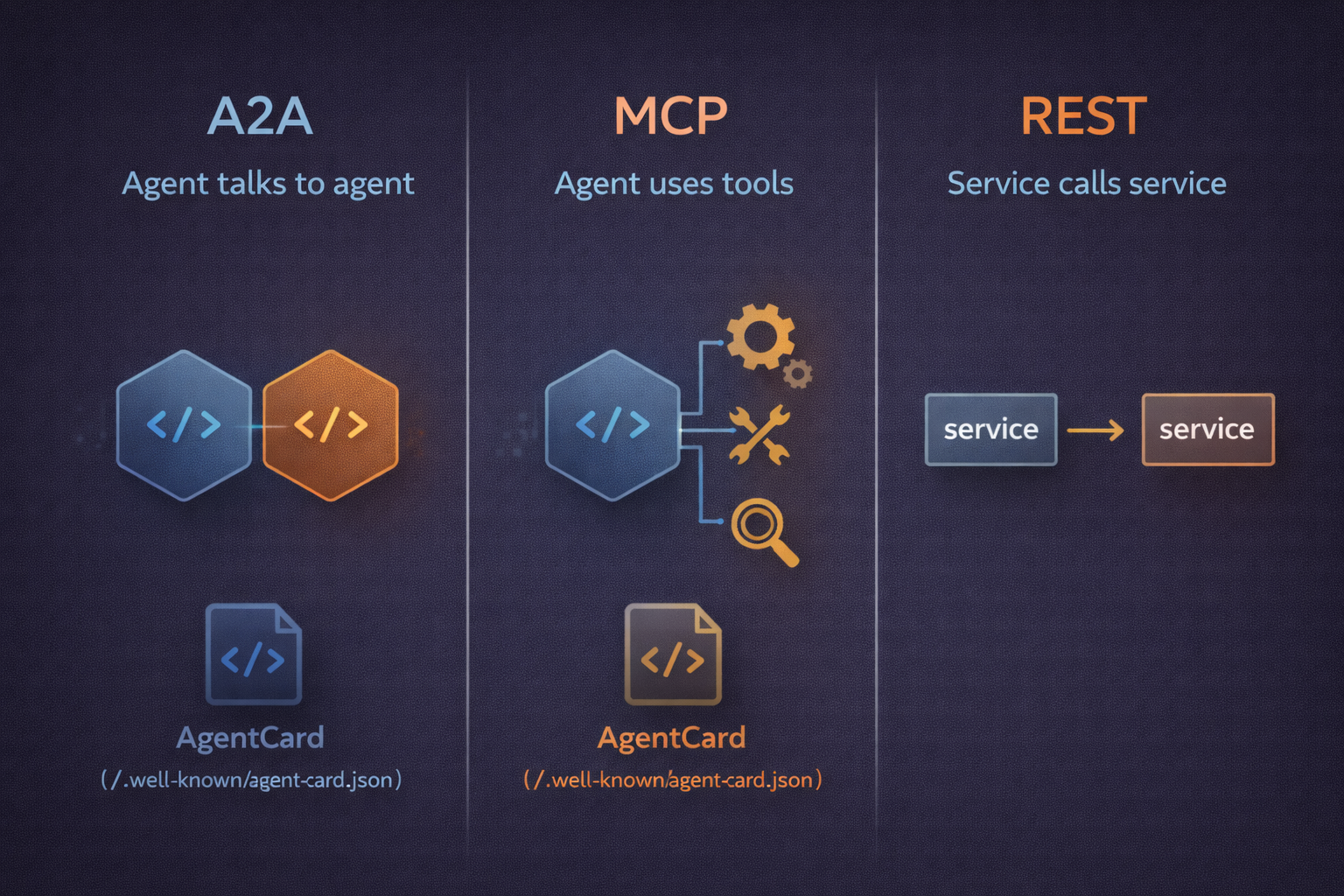

A2A vs MCP vs direct REST

The easiest mistake is treating A2A and MCP as alternatives. They are not. MCP is how an agent consumes tools and data sources — the agent is the client, the tool is the server. A2A is how an agent is reached as a peer — the agent is the server, and another agent is the client. A well-built system uses both: your Spring AI agent exposes an A2A surface outward, and internally it uses MCP to reach its own retrieval, search, and action tools.

Direct REST still wins when the other side does not need the planning or reasoning affordances of an agent. If you are asking a service for the current balance of an account, you want a REST endpoint, not an agent with a skill that summarizes the balance in natural language. The moment you add A2A in front of a deterministic workflow, you pay for non-determinism, token cost, and latency you did not need.

The decision rule I use: A2A when the other side is an agent and lives in a different trust domain. MCP when I need tools inside my agent. REST when the call is deterministic and the other side can be deterministic too. A message queue when the work can be asynchronous and backpressure matters more than immediacy.
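Written as code, the rule looks roughly like this. The `CallProfile` fields and the order of the branches are my reading of the rule, not a canonical algorithm, and `EXISTING_INTERNAL` stands for the earlier advice to keep whatever transport you already use for agent calls inside your own trust boundary.

```kotlin
enum class Transport { A2A, MCP, REST, MESSAGE_QUEUE, EXISTING_INTERNAL }

// Hypothetical description of a call site; field names are illustrative.
data class CallProfile(
    val isToolForMyAgent: Boolean,
    val calleeIsAgent: Boolean,
    val crossesTrustDomain: Boolean,
    val canBeAsync: Boolean,
    val backpressureMatters: Boolean,
)

fun chooseTransport(p: CallProfile): Transport = when {
    // Tools and data sources consumed by my own agent.
    p.isToolForMyAgent -> Transport.MCP
    // A peer agent in a different trust domain.
    p.calleeIsAgent && p.crossesTrustDomain -> Transport.A2A
    // A peer agent inside my own boundary: do not reroute it through A2A.
    p.calleeIsAgent -> Transport.EXISTING_INTERNAL
    // Deterministic work that tolerates delay and needs backpressure.
    p.canBeAsync && p.backpressureMatters -> Transport.MESSAGE_QUEUE
    // Deterministic request/response.
    else -> Transport.REST
}
```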

What goes wrong in production

Three failure modes show up repeatedly. The first is AgentCard drift: your skill signature changes, the card updates automatically, and the caller's code breaks silently because they cached the old schema. Mitigate with explicit versioning in the skill id (summarize-v2), not just in the agent's version string, and with a deprecation window where both versions are served.
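A deprecation window can be as simple as a table of skill ids with an optional sunset date. The sketch below assumes that model; `SkillVersion`, `SkillStatus`, and the dates are hypothetical, and how you surface a deprecation warning to callers is left open.

```kotlin
import java.time.LocalDate

// Hypothetical registry entry: a skill id and, if deprecated, the last day it is served.
data class SkillVersion(val id: String, val sunset: LocalDate? = null)

enum class SkillStatus { ACTIVE, DEPRECATED, RETIRED, UNKNOWN }

// Decides how a request for `id` should be treated on `today`.
fun skillStatus(id: String, versions: List<SkillVersion>, today: LocalDate): SkillStatus {
    val version = versions.firstOrNull { it.id == id } ?: return SkillStatus.UNKNOWN
    val sunset = version.sunset ?: return SkillStatus.ACTIVE
    // The sunset date itself is still inside the window.
    return if (today.isAfter(sunset)) SkillStatus.RETIRED else SkillStatus.DEPRECATED
}
```

During the window both `summarize` and `summarize-v2` are served. A DEPRECATED result is the hook for logging which callers still depend on the old id.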

The second is cost blowup on the caller side. When you expose an agent over A2A, you have no visibility into how aggressively the other side will call it. A poorly written external client can loop on your agent, and every loop is a model call on your bill. Put per-caller quotas in front of the A2A endpoints before you publish, not after.
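As a starting point, a per-caller quota can be a fixed-window counter keyed by caller id. This is a single-process sketch under that assumption; `PerCallerQuota` is a hypothetical class, and a multi-instance deployment would need shared state or enforcement at the gateway instead.

```kotlin
import java.util.concurrent.ConcurrentHashMap

// Fixed-window quota: at most `limit` calls per caller in each window of `windowMillis`.
class PerCallerQuota(private val limit: Int, private val windowMillis: Long) {
    private data class Window(val start: Long, val count: Int)

    private val windows = ConcurrentHashMap<String, Window>()

    // Returns true and records the call if the caller is under quota; false otherwise.
    fun tryAcquire(callerId: String, nowMillis: Long = System.currentTimeMillis()): Boolean {
        var allowed = false
        // compute() runs atomically per key, so concurrent calls cannot overshoot the limit.
        windows.compute(callerId) { _, current ->
            val window =
                if (current == null || nowMillis - current.start >= windowMillis) Window(nowMillis, 0)
                else current
            allowed = window.count < limit
            if (allowed) window.copy(count = window.count + 1) else window
        }
        return allowed
    }
}
```

Call `tryAcquire` with the authenticated caller identity before the handler reaches the model, and reject over-quota requests at that point so they never become a model call.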

The third is observability gaps. A2A message traces and internal tool-call reasoning spans live in different planes unless you stitch them together. In Spring AI you get this mostly for free if you already have Micrometer tracing wired up, but only if you propagate the A2A taskId as a span attribute. Without it, a failed cross-agent workflow looks like two unrelated incidents in two different services.
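The mechanics are small. The sketch below uses a stand-in `TaggableSpan` interface rather than a real tracing type so that it runs on its own; it mirrors the `tag(key, value)` shape that tracing APIs such as Micrometer Tracing expose on a span. The attribute name `a2a.task.id` is my choice, not a standard.

```kotlin
// Minimal stand-in for a tracing span; swap in your tracing library's span type.
interface TaggableSpan {
    fun tag(key: String, value: String)
}

// Hypothetical attribute name; use the same one on both sides of the call.
val A2A_TASK_ID_ATTRIBUTE = "a2a.task.id"

// Attaches the A2A task id to a span.
fun tagWithTaskId(span: TaggableSpan, taskId: String) {
    span.tag(A2A_TASK_ID_ATTRIBUTE, taskId)
}

// In-memory span used only to demonstrate the effect.
class RecordingSpan(val name: String) : TaggableSpan {
    val tags = mutableMapOf<String, String>()
    override fun tag(key: String, value: String) {
        tags[key] = value
    }
}

// Groups span names by task id, so one cross-agent workflow reads as one incident.
fun correlate(spans: List<RecordingSpan>): Map<String, List<String>> =
    spans.filter { A2A_TASK_ID_ATTRIBUTE in it.tags }
        .groupBy({ it.tags.getValue(A2A_TASK_ID_ATTRIBUTE) }, { it.name })
```

Spans without the attribute fall out of the grouping entirely, which is the "two unrelated incidents" problem in miniature.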

When I'd actually reach for it

Reach for A2A when you are publishing an agent across an organizational boundary and you care more about interoperability than about optimizing a specific caller. Reach for it when you want your agent to show up in an external registry or be discoverable by agents you have never met. Reach for it when the ergonomic cost of keeping a hand-rolled REST-plus-OpenAPI-plus-auth setup in sync with a second team is higher than the cost of adopting a standard.

Avoid it for internal agent-to-agent calls inside one service mesh. Avoid it when the remote side is a deterministic API dressed up as an agent. Avoid it for latency-sensitive hot paths where the protocol overhead and model invocation will blow your SLO.

Takeaways

- Expose server-side first; defer client adoption until the client APIs settle.

- Version skills in the skill id, not just the agent version. The AgentCard will not save you.

- Put rate limits and per-caller cost caps in front of any A2A endpoint before you publish.

- A2A and MCP are complementary. Use A2A at the outer boundary and MCP for tools inside the agent.

- Propagate the A2A taskId into your tracing spans so cross-agent failures are debuggable.

- Treat AgentCard auth declarations as documentation, not enforcement. Bring your own trust model.

Use it at boundaries. Avoid it as glue. Ship it behind quotas.
